Installation and Deployment

Installation Guides

For installation instructions, please see the Installation tutorial for your platform.

Architecture

QuantRocket utilizes a Docker-based microservice architecture. Users who are unfamiliar with microservices or new to Docker may find it helpful to read the overview of QuantRocket's architecture.

License key

Activation

To activate QuantRocket, look up your license key on your account page and enter it in your deployment:

View your license

You can view the details of the currently installed license:

$ quantrocket license get

licensekey: XXXXXXXXXXXXXXXX

software_license:

account:

account_limit: XXXXXX USD

concurrent_install_limit: XX

license_type: Professional

user_limit: XX

>>> from quantrocket.license import get_license_profile

>>> get_license_profile()

{'licensekey': 'XXXXXXXXXXXXXXXX',

'software_license': {'license_type': 'Professional',

'user_limit': XX,

'concurrent_install_limit': XX,

'account': {'account_limit': 'XXXXXX USD'}}}

$ curl -X GET 'http://houston/license-service/license'

{"licensekey": "XXXXXXXXXXXXXXXX", "software_license": {"license_type": "Professional", "user_limit": XX, "concurrent_install_limit": XX, "account": {"account_limit": "XXXXXX USD"}}}

The license service will re-query your subscriptions and permissions every 10 minutes. If you make a change to your billing plan and want your deployment to see the change immediately, you can force a refresh:

Account limit validation

The account limit displayed in your license profile output applies to live trading using the blotter and to real-time data. The account limit does not apply to historical data collection, research, or backtesting. For advisor accounts, the account size is the sum of all master and sub-accounts.

Paper trading is not subject to the account limit, however paper trading requires that the live account limit has previously been validated. Thus before paper trading it is first necessary to connect your live account at least once and let the software validate it.

To validate your account limit if you have only connected your paper account:

- Switch to your live account using the instructions for your broker

- Wait approximately 1 minute. The software queries your account balance every minute whenever your broker is connected.

To verify that account validation has occurred, refresh your license profile. It should now display your account balance and whether the balance is under the account limit:

$ quantrocket license get --force-refresh

licensekey: XXXXXXXXXXXXXXXX

software_license:

account:

account_balance: 593953.42 USD

account_balance_details:

- Account: U12345

Currency: USD

NetLiquidation: 593953.42 USD

account_balance_under_limit: true

account_limit: XXXXXX USD

concurrent_install_limit: XX

license_type: Professional

user_limit: XX

>>> from quantrocket.license import get_license_profile

>>> get_license_profile(force_refresh=True)

{'licensekey': 'XXXXXXXXXXXXXXXX',

'software_license': {'license_type': 'Professional',

'user_limit': XX,

'concurrent_install_limit': XX,

'account': {'account_limit': 'XXXXXX USD',

'account_balance': '593953.42 USD',

'account_balance_under_limit': True,

'account_balance_details': [{'Account': 'U12345',

'Currency': 'USD',

'NetLiquidation': 593953.42}]}}}

$ curl -X GET 'http://houston/license-service/license?force_refresh=true'

{"licensekey": "XXXXXXXXXXXXXXXX", "software_license": {"license_type": "Professional", "user_limit": XX, "concurrent_install_limit": XX, "account": {"account_limit": "XXXXXX USD", "account_balance": "593953.42 USD", "account_balance_under_limit": true, "account_balance_details": [{"Account": "U12345", "Currency": "USD", "NetLiquidation": 593953.42}]}}}

If the command output is missing the account_balance and account_balance_under_limit keys, this indicates that the account limit has not yet been validated.

Now you can switch back to your paper account and begin paper trading.

User limit vs concurrent install limit

The output of your license profile displays your user limit and your concurrent install limit. User limit indicates the total number of distinct users who are licensed to use the software in any given month. Concurrent install limit indicates the total number of copies of the software that may be installed and running at any given time.

The concurrent install limit is set to (user limit + 1).

Connect from other applications

If you run other applications, you can connect them to your QuantRocket deployment for the purpose of querying data, submitting orders, etc.

Each remote connection to a cloud deployment counts against your plan's concurrent install limit. For example, if you run a single cloud deployment of QuantRocket and connect to it from a single remote application, this is counted as 2 concurrent installs, one for the deployment and one for the remote connection. (Connecting to a local deployment from a separate application running on your local machine does not count against the concurrent install limit.)

To utilize the Python API and/or CLI from outside of QuantRocket, install the client on the application or system you wish to connect from:

$ pip install 'quantrocket-client'

To ensure compatibility, the MAJOR.MINOR version of the client should match the MAJOR.MINOR version of your deployment. For example, if your deployment is version 2.1.x, you can install the latest 2.1.x client:

$ pip install 'quantrocket-client>=2.1,<2.2'

Don't forget to update your client version when you update your deployment version.

Next, set environment variables to tell the client how to connect to your QuantRocket deployment. For a cloud deployment, this means providing the deployment URL and credentials:

$

$ export HOUSTON_URL=https://quantrocket.123capital.com

$ export HOUSTON_USERNAME=myusername

$ export HOUSTON_PASSWORD=mypassword

$

$ [Environment]::SetEnvironmentVariable("HOUSTON_URL", "https://quantrocket.123capital.com", "User")

$ [Environment]::SetEnvironmentVariable("HOUSTON_USERNAME", "myusername", "User")

$ [Environment]::SetEnvironmentVariable("HOUSTON_PASSWORD", "mypassword", "User")

For connecting to a local deployment, only the URL is needed:

$

$ export HOUSTON_URL=http://localhost:1969

$

$ [Environment]::SetEnvironmentVariable("HOUSTON_URL", "http://localhost:1969", "User")

Environment variable syntax varies by operating system. Don't forget to make your environment variables persistent by adding them to .bashrc (Linux) or .profile (MacOS) and sourcing it (for example source ~/.bashrc), or restarting PowerShell (Windows).

Finally, test that it worked:

$ quantrocket houston ping

msg: hello from houston

>>> from quantrocket.houston import ping

>>> ping()

{u'msg': u'hello from houston'}

$ curl -u myusername:mypassword https://quantrocket.123capital.com/ping

{"msg": "hello from houston"}

To connect from applications running languages other than Python, you can skip the client installation and use the HTTP API directly.

Multi-user deployments

Hedge funds and other multi-user organizations can benefit from the ability to run more than one QuantRocket deployment.

The primary user interface for QuantRocket is JupyterLab, which is best suited for use by a single user at a time. While it is possible for multiple users to log in to the same QuantRocket cloud deployment, it is usually not ideal because they will be working in a shared JupyterLab environment, with a shared filesytem and notebooks, shared JupyterLab terminals and kernels, and shared compute resources. This will likely lead to stepping on each other's toes.

For hedge funds, a recommended deployment strategy is to run a primary deployment for data collection and live trading, and one or more research deployments (depending on subscription) for research and backtesting.

| Deployed to | How many | Connects to Brokers and Data Providers | Used for | Used by |

|---|

| Primary deployment | Cloud | 1 | Yes | Data collection, live trading | Sys admin / owner / manager |

| Research deployment(s) | Cloud or local | 1 or more | No | Research and backtesting | Quant researchers |

Collect data on the primary deployment and push it to S3. Once pushed, deep historical data can optionally be purged from the primary deployment, retaining only enough historical data to run live trading. Then, selectively pull databases from S3 onto the research deployment(s), where researchers analyze the data and run backtests.

Research deployments can be hosted in the cloud or run on the researcher's local workstation.

Each researcher's code, notebooks, and JupyterLab environment are isolated from those of other researchers. The code can be pushed to separate Git repositories, with sharing and access control managed on the Git repositories.

Broker and Data Connections

This section outlines how to connect to brokers and third-party data providers.

Because QuantRocket runs on your hardware, third-party credentials and API keys that you enter into the software are secure. They are encrypted at rest and never leave your deployment. They are used solely for connecting directly to the third-party API.

Interactive Brokers

IBKR Account Structure

Multiple logins and data concurrency

The structure of your Interactive Brokers (IBKR) account has a bearing on the speed with which you can collect real-time and historical data with QuantRocket. In short, the more IB Gateways you run, the more data you can collect. The basics of account structure and data concurrency are outlined below:

- All interaction with the IBKR servers, including real-time and historical data collection, is routed through IB Gateway, IBKR's slimmed-down version of Trader Workstation.

- IBKR imposes rate limits on the amount of historical and real-time data that can be received through IB Gateway.

- Each IB Gateway is tied to a particular set of login credentials. Each login can be running only one active IB Gateway session at any given time.

- However, an account holder can have multiple logins—at least two logins or possibly more, depending on the account structure. Each login can run its own IB Gateway session. In this way, an account holder can potentially run multiple instances of IB Gateway simultaneously.

- QuantRocket is designed to take advantage of multiple IB Gateways. When running multiple gateways, QuantRocket will spread your market data requests among the connected gateways.

- Since each instance of IB Gateway is rate limited separately by IBKR, the combined data throughput from splitting requests among two IB Gateways is twice that of sending all requests to one IB Gateway.

- Each separate login must separately subscribe to the relevant market data in IBKR Client Portal.

Below are a few common ways to obtain additional logins.

IBKR account structures are complex and vary by subsidiary, local regulations, the person opening the account, etc. The following guidelines are suggestions only and may not be applicable to your situation.

Second user login

Individual account holders can add a second login to their account. This is designed to allow you to use one login for API trading while using the other login to use Trader Workstation for manual trading or account monitoring. However, you can use both logins to collect data with QuantRocket. Note that you can't use the same login to simultaneously run Trader Workstation and collect data with QuantRocket. However, QuantRocket makes it easy to start and stop IB Gateway on a schedule, so the following is an option:

- Login 1 (used for QuantRocket only)

- IB Gateway always running and available for data collection and placing API orders

- Login 2 (used for QuantRocket and Trader Workstation)

- automatically stop IB Gateway daily at 9:30 AM

- Run Trader Workstation during trading session for manual trading/account monitoring

- automatically start IB Gateway daily at 4:00 PM so it can be used for overnight data collection

Advisor/Friends and Family accounts

An advisor account or the similarly structured Friends and Family account offers the possibility to obtain additional logins. Even an individual trader can open a Friends and Family account, in which they serve as their own advisor. The account setup is as follows:

- Master/advisor account: no trading occurs in this account. The account is funded only with enough money to cover market data costs. This yields 1 IB Gateway login.

- Master/advisor second user login: like an individual account, the master account can create a second login, subscribe to market data with this login, and use it for data collection.

- Client account: this is main trading account where the trading funds are deposited. This account receives its own login (for 3 total). By default this account does not having trading permissions, but you can enable client trading permissions via the master account, then subscribe to market data in the client account and begin using the client login to run another instance of IB Gateway. (Note that it's not possible to add a second login for a client account.)

If you have other accounts such as retirement accounts, you can add them as additional client accounts and obtain additional logins.

Paper trading accounts

Each IBKR account holder can enable a paper trading account for simulated trading. You can share market data with your paper account and use the paper account login with QuantRocket to collect data, as well as to paper trade your strategies. You don't need to switch to using your live account until you're ready for live trading (although it's also fine to use your live account login from the start).

Note that, due to restrictions on market data sharing, it's not possible to run IB Gateway using the live account login and corresponding paper account login at the same time. If you try, one of the sessions will disconnect the other session.

IBKR market data permissions

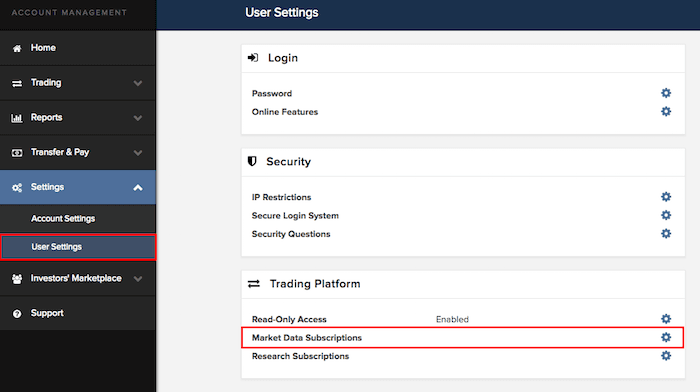

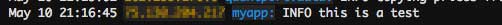

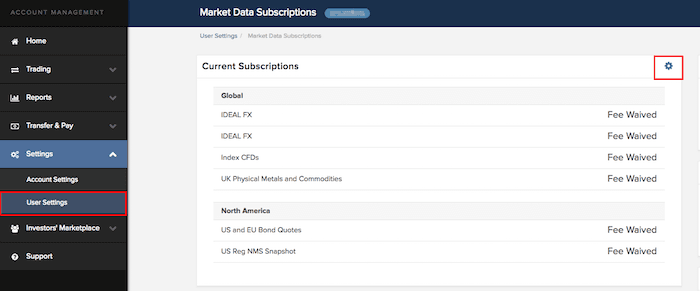

To collect IBKR data using QuantRocket, you must subscribe to the relevant market data in your IBKR account. In IBKR Client Portal, click on Settings > User Settings > Market Data Subscriptions:

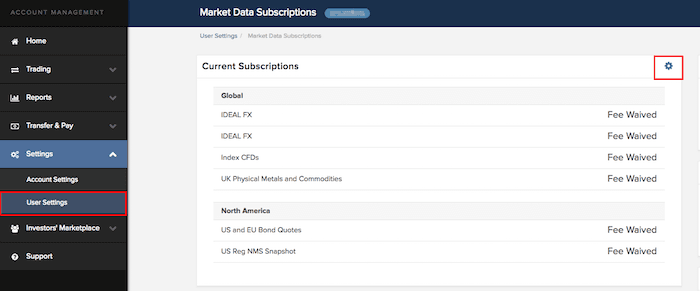

Click the edit icon then select and confirm the relevant subscriptions:

Market data for paper accounts

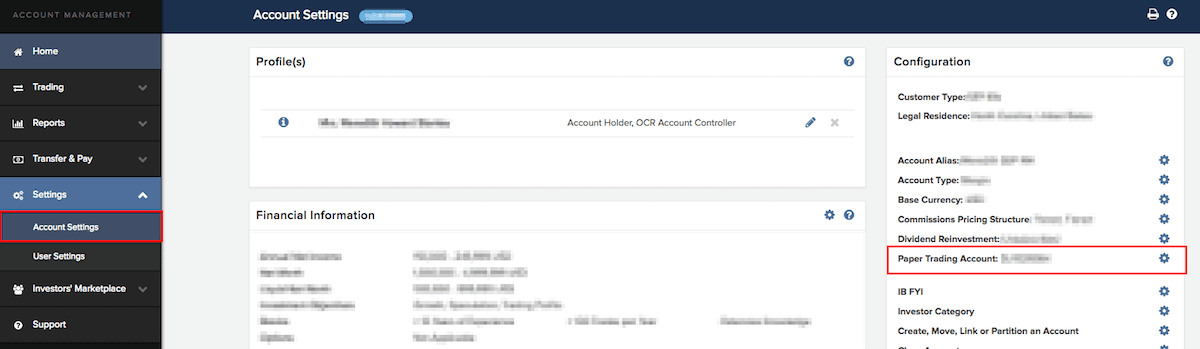

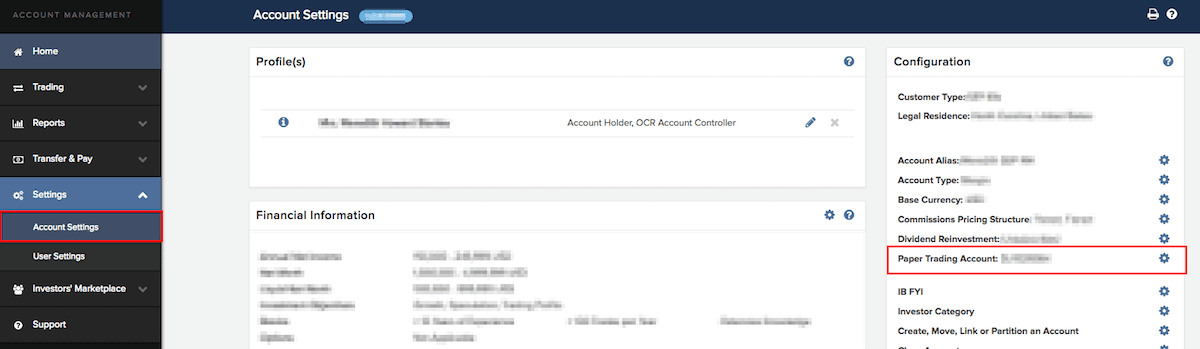

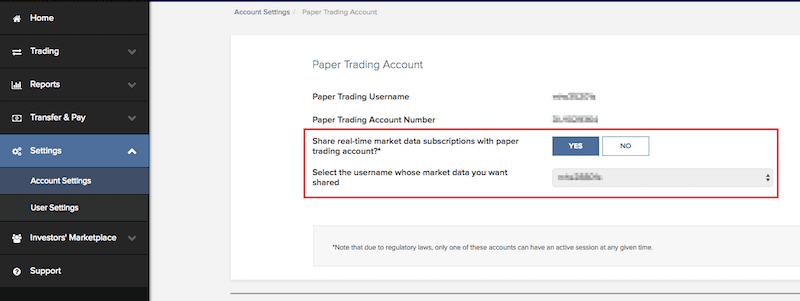

IBKR paper accounts do not directly subscribe to market data. Rather, to access market data using your IBKR paper account, subscribe to the data in your live account and share it with your paper account. Log in to IBKR Client Portal with your live account login and go to Settings > Account Settings > Paper Trading Account:

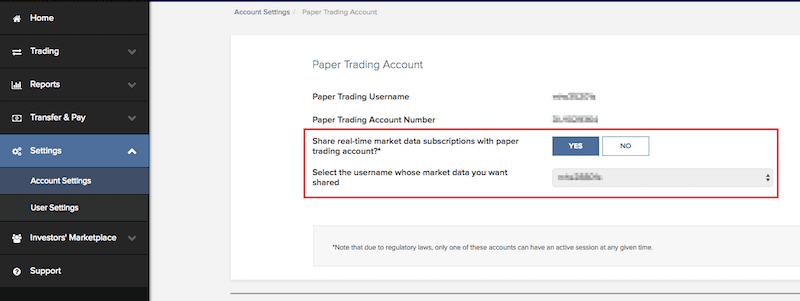

Then select the option to share your live account's market data with your paper account:

IB Gateway

QuantRocket uses the IBKR API to collect market data from IBKR, submit orders, and track positions and account balances. All communication with IBKR is routed through IB Gateway, a Java application which is a slimmed-down version of Trader Workstation (TWS) intended for API use. You can run one or more IB Gateway services through QuantRocket, where each gateway instance is associated with a different IBKR username and password.

Connect to IBKR

Your credentials are encrypted at rest and never leave your deployment.

IB Gateway runs inside the ibg1 container and connects to IBKR using your IBKR username and password. (If you have multiple IBKR usernames, you can run multiple IB Gateways.) The ibgrouter container provides an API that allows you to start and stop IB Gateway inside the ibg container(s).

The steps for connecting to your IBKR account and starting IB Gateway differ depending on whether your IBKR account requires the use of a security card at login.

Secure Login System (SLS)

For fully automated configuration and running of IB Gateway, you must partially opt out of the Secure Login System (SLS), IBKR's two-factor authentication. With a partial opt-out, your username and password (but not your security device) are required for logging into IB Gateway and other IBKR trading platforms. Your security device is still required for logging in to Client Portal. A partial opt-out can be performed in Client Portal by going to Settings > User Settings > Secure Login System > Secure Login Settings.

If you prefer not to perform a partial opt-out of IBKR's Secure Login System (SLS) or can't for regulatory reasons, you can still use QuantRocket but will need to manually enter your security code each time you start IB Gateway using your live login.

A security card is not required for paper accounts, so you can enjoy full automation by using your paper account, even if your live account requires a security card for login.

Enter IBKR login (no security card)

To connect to your IBKR account, enter your IBKR login into your deployment, as well as the desired trading mode (live or paper). You'll be prompted for your password:

$ quantrocket ibg credentials 'ibg1' --username 'myuser' --paper

Enter IBKR Password:

status: successfully set ibg1 credentials

>>> from quantrocket.ibg import set_credentials

>>> set_credentials("ibg1", username="myuser", trading_mode="paper")

Enter IBKR Password:

{'status': 'successfully set ibg1 credentials'}

$ curl -X PUT 'http://houston/ibg1/credentials' -d 'username=myuser' -d 'password=mypassword' -d 'trading_mode=paper'

{"status": "successfully set ibg1 credentials"}

When setting your credentials, QuantRocket performs several steps. It stores your credentials inside your deployment so you don't need to enter them again. It then starts and stops IB Gateway, which causes IB Gateway to download a settings file which QuantRocket then configures appropriately. The entire process takes approximately 30 seconds to complete.

If you encounter errors trying to start IB Gateway, refer to a later section to learn how to

access the IB Gateway GUI for troubleshooting.

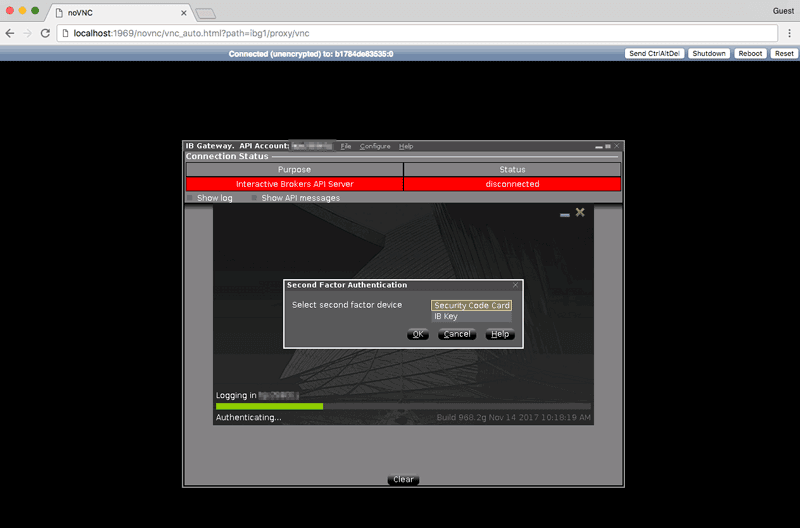

Enter IBKR login (security card required)

To connect to a live IBKR account which requires second factor authentication, enter your IBKR login into your deployment. You'll be prompted for your password:

$ quantrocket ibg credentials 'ibg1' --username 'myuser' --live

Enter IBKR Password:

msg: Cannot start gateway because second factor authentication is required. API settings

not updated. Please open the IB Gateway GUI to complete authentication, then manually

update the API settings. See http://qrok.it/h/ib2fa to learn more

status: error

>>> from quantrocket.ibg import set_credentials

>>> set_credentials("ibg1", username="myuser", trading_mode="live")

Enter IBKR Password:

HTTPError: ('401 Client Error: UNAUTHORIZED for url: http://houston/ibg1/credentials', {'status': 'error', 'msg': 'Cannot start gateway because second factor authentication is required. API settings not updated. Please open the IB Gateway GUI to complete authentication, then manually update the API settings. See http://qrok.it/h/ib2fa to learn more'})

$ curl -X PUT 'http://houston/ibg1/credentials' -d 'username=myuser' -d 'password=mypassword' -d 'trading_mode=live'

{"status": "error", "msg": "Cannot start gateway because second factor authentication is required. API settings not updated. Please open the IB Gateway GUI to complete authentication, then manually update the API settings. See http://qrok.it/h/ib2fa to learn more"}

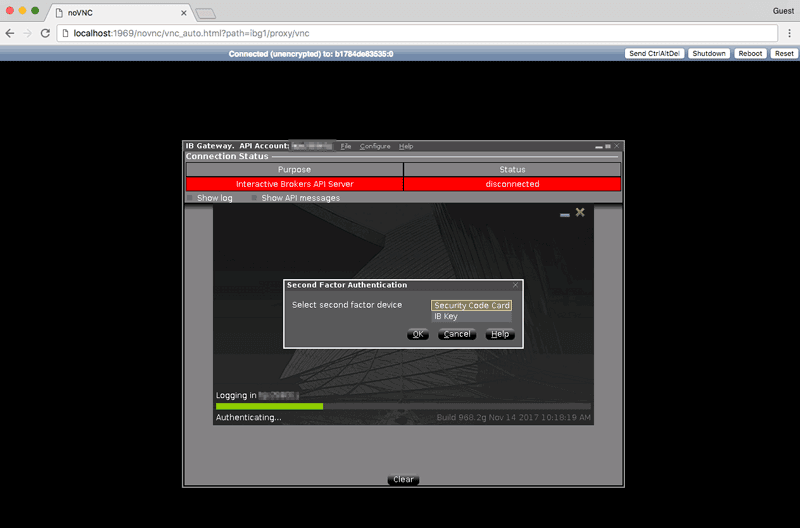

An error message advises you to open the IB Gateway GUI to complete the login. Follow the instructions in a later section to open the GUI, and enter your security code to complete the login.

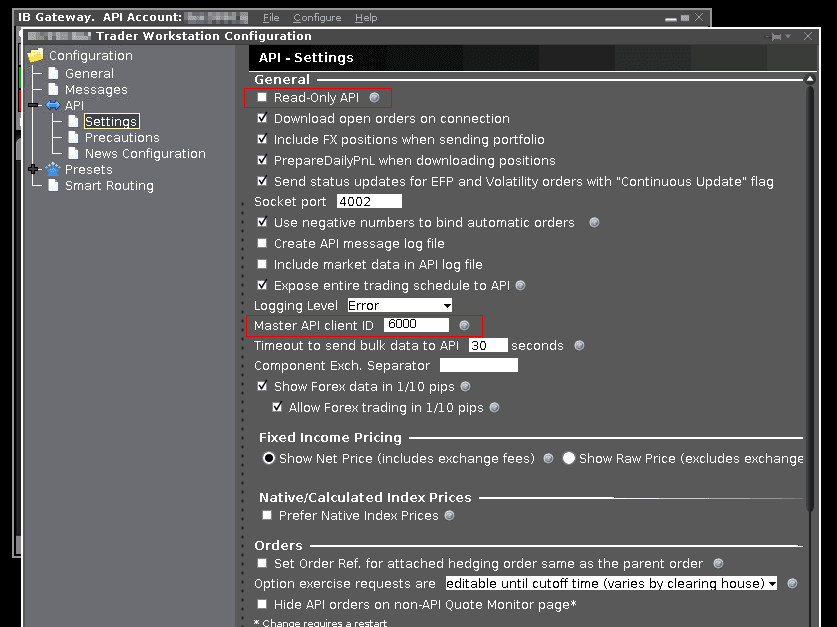

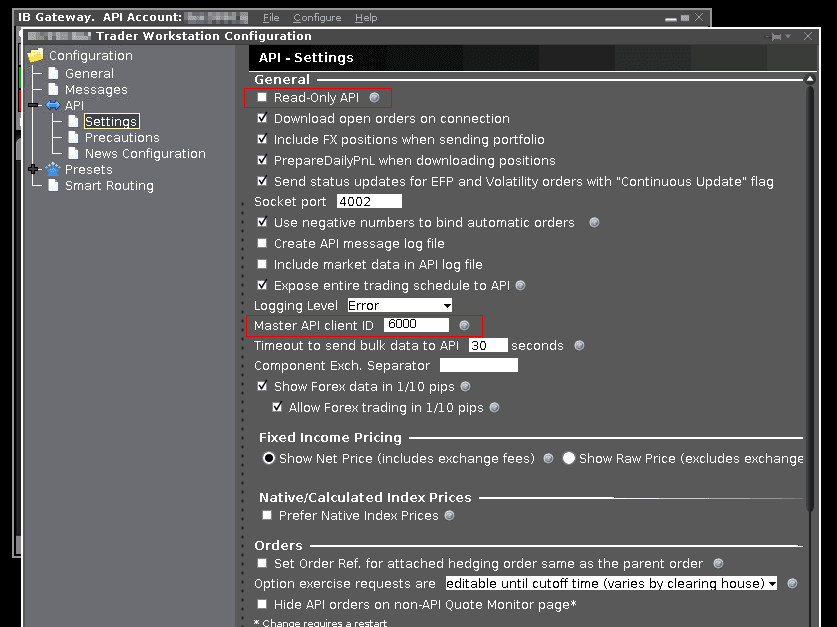

Due to the security card requirement, QuantRocket wasn't able to programatically update IB Gateway settings, so you should update those manually. In the IB Gateway GUI, click Configure > Settings and change the following settings:

- uncheck Read-only API (if you intend to place orders using QuantRocket)

- set Master Client ID to 6000 (if you want QuantRocket to track your trades)

To quit the GUI session but leave IB Gateway running, simply close your browser tab.

Verify IBKR connection

Querying your IBKR account balance is a good way to verify your IBKR connection:

$ quantrocket account balance --latest --fields 'NetLiquidation' | csvlook

| Account | Currency | NetLiquidation | LastUpdated |

| --------- | -------- | -------------- | ------------------- |

| DU12345 | USD | 500,000.00 | 2020-02-02 22:57:13 |

>>> from quantrocket.account import download_account_balances

>>> import io

>>> import pandas as pd

>>> f = io.StringIO()

>>> download_account_balances(f, latest=True, fields=["NetLiquidation"])

>>> balances = pd.read_csv(f, parse_dates=["LastUpdated"])

>>> balances.head()

Account Currency NetLiquidation LastUpdated

0 DU12345 USD 500000.0 2020-02-02 22:57:13

$ curl 'http://houston/account/balances.csv?latest=true&fields=NetLiquidation'

Account,Currency,NetLiquidation,LastUpdated

DU12345,USD,500000.0,2020-02-02 22:57:13

Switch between live and paper account

When you sign up for an IBKR paper account, IBKR provides login credentials for the paper account. However, it is also possible to login to the paper account by using your live account credentials and specifying the trading mode as "paper". Thus, technically the paper login credentials are unnecessary.

Using your live login credentials for both live and paper trading allows you to easily switch back and forth. Supposing you originally select the paper trading mode:

$ quantrocket ibg credentials 'ibg1' --username 'myliveuser' --paper

Enter IBKR Password:

status: successfully set ibg1 credentials

>>> from quantrocket.ibg import set_credentials

>>> set_credentials("ibg1", username="myliveuser", trading_mode="paper")

Enter IBKR Password:

{'status': 'successfully set ibg1 credentials'}

$ curl -X PUT 'http://houston/ibg1/credentials' -d 'username=myliveuser' -d 'password=mypassword' -d 'trading_mode=paper'

{"status": "successfully set ibg1 credentials"}

You can later switch to live trading mode without re-entering your credentials:

$ quantrocket ibg credentials 'ibg1' --live

status: successfully set ibg1 credentials

>>> set_credentials("ibg1", trading_mode="live")

{'status': 'successfully set ibg1 credentials'}

$ curl -X PUT 'http://houston/ibg1/credentials' -d 'trading_mode=live'

{"status": "successfully set ibg1 credentials"}

If you forget which mode you're in (or which login you used), you can check:

$ quantrocket ibg credentials 'ibg1'

TRADING_MODE: live

TWSUSERID: myliveuser

>>> from quantrocket.ibg import get_credentials

>>> get_credentials("ibg1")

{'TWSUSERID': 'myliveuser', 'TRADING_MODE': 'live'}

$ curl -X GET 'http://houston/ibg1/credentials'

{"TWSUSERID": "myliveuser", "TRADING_MODE": "live"}

Start/stop IB Gateway

IB Gateway must be running whenever you want to collect market data or place or monitor orders. You can optionally stop IB Gateway when you're not using it.

To check the current status of your IB Gateway(s):

$ quantrocket ibg status

ibg1: stopped

>>> from quantrocket.ibg import list_gateway_statuses

>>> list_gateway_statuses()

{'ibg1': 'stopped'}

$ curl -X GET 'http://houston/ibgrouter/gateways'

{"ibg1": "stopped"}

You can start IB Gateway, optionally waiting for the startup process to complete:

$ quantrocket ibg start --wait

ibg1:

status: running

>>> from quantrocket.ibg import start_gateways

>>> start_gateways(wait=True)

{'ibg1': {'status': 'running'}}

$ curl -X POST 'http://houston/ibgrouter/gateways?wait=True'

{"ibg1": {"status": "running"}}

And later stop it:

$ quantrocket ibg stop --wait

ibg1:

status: stopped

>>> from quantrocket.ibg import stop_gateways

>>> stop_gateways(wait=True)

{'ibg1': {'status': 'stopped'}}

$ curl -X DELETE 'http://houston/ibgrouter/gateways?wait=True'

{"ibg1": {"status": "stopped"}}

Although IB Gateway is advertised as not having to be restarted once a day like Trader Workstation, it's not unusual for IB Gateway to display unexpected behavior (such as not returning market data when requested) which is then resolved simply by restarting IB Gateway. Therefore you might find it beneficial to restart your gateways from time to time, which you could do via countdown, QuantRocket's cron service:

0 1 * * * quantrocket ibg stop --wait && quantrocket ibg start

Or, perhaps you use one of your IBKR logins during the day to monitor the market using Trader Workstation, but in the evenings you'd like to use this login to add concurrency to your historical data collection. You could start and stop the IB Gateway service in conjunction with the data collection:

30 17 * * 1-5 quantrocket ibg start --wait --gateways 'ibg2' && quantrocket history collect "nasdaq-1d" && quantrocket history wait "nasdaq-1d" && quantrocket ibg stop --gateways 'ibg2'

IB Gateway GUI

Normally you won't need to access the IB Gateway GUI. However, you might need access to troubleshoot a login issue, or if you've enabled two-factor authentication for IB Gateway.

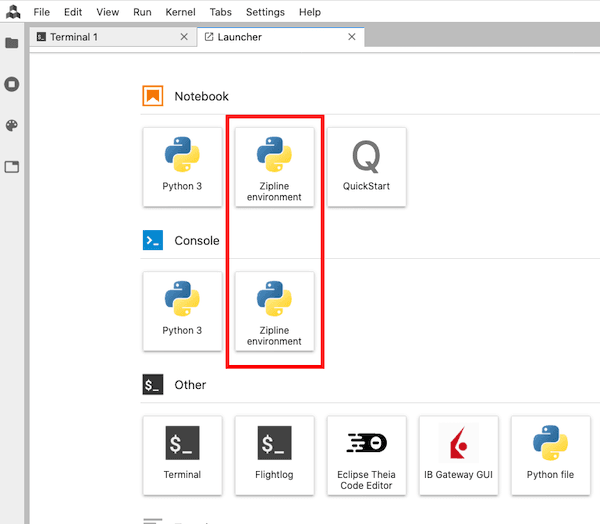

To allow access to the IB Gateway GUI, QuantRocket uses NoVNC, which uses the WebSockets protocol to support VNC connections in the browser. To open an IB Gateway GUI connection in your browser, click the "IB Gateway GUI" button located on the JupyterLab Launcher or from the File menu. The IB Gateway GUI will open in a new window (make sure your browser doesn't block the pop-up).

If IB Gateway isn't currently running, the screen will be black.

To quit the VNC session but leave IB Gateway running, simply close your browser tab.

For improved security for cloud deployments, QuantRocket doesn't directly expose any VNC ports to the outside. By proxying VNC connections through houston using NoVNC, such connections are protected by Basic Auth and SSL, just like every other request sent through houston.

Multiple IB Gateways

QuantRocket support running multiple IB Gateways, each associated with a particular IBKR login. Two of the main reasons for running multiple IB Gateways are:

- To trade multiple accounts

- To increase market data concurrency

The default IB Gateway service is called ibg1. To run multiple IB Gateways, create a file called docker-compose.override.yml in the same directory as your docker-compose.yml and add the desired number of additional services as shown below. In this example we are adding two additional IB Gateway services, ibg2 and ibg3, which inherit from the definition of ibg1:

version: '2.4'

services:

ibg2:

extends:

file: docker-compose.yml

service: ibg1

ibg3:

extends:

file: docker-compose.yml

service: ibg1

You can learn more about docker-compose.override.yml in another section.

Then, deploy the new service(s):

$ cd /path/to/docker-compose.yml

$ docker-compose -p quantrocket up -d

You can then enter your login for each of the new IB Gateways:

$ quantrocket ibg credentials 'ibg2' --username 'myuser' --paper

Enter IBKR Password:

status: successfully set ibg2 credentials

>>> from quantrocket.ibg import set_credentials

>>> set_credentials("ibg2", username="myuser", trading_mode="paper")

Enter IBKR Password:

{'status': 'successfully set ibg2 credentials'}

$ curl -X PUT 'http://houston/ibg2/credentials' -d 'username=myuser' -d 'password=mypassword' -d 'trading_mode=paper'

{"status": "successfully set ibg2 credentials"}

When starting and stopping gateways, the default behavior is start or stop

all gateways. To target specific gateways, use the

gateways parameter:

$ quantrocket ibg start --gateways 'ibg2'

status: the gateways will be started asynchronously

>>> from quantrocket.ibg import start_gateways

>>> start_gateways(gateways=["ibg2"])

{'status': 'the gateways will be started asynchronously'}

$ curl -X POST 'http://houston/ibgrouter/gateways?gateways=ibg2'

{"status": "the gateways will be started asynchronously"}

Market data permission file

Generally, loading your market data permissions into QuantRocket is only necessary when you are running multiple IB Gateway services with different market data permissions for each.

To retrieve market data from IBKR, you must subscribe to the appropriate market data subscriptions in IBKR Client Portal. QuantRocket can't identify your subscriptions via API, so you must tell QuantRocket about your subscriptions by loading a YAML configuration file. If you don't load a configuration file, QuantRocket will assume you have market data permissions for any data you request through QuantRocket. If you only run one IB Gateway service, this is probably sufficient and you can skip the configuration file. However, if you run multiple IB Gateway services with separate market data permissions for each, you will probably want to load a configuration file so QuantRocket can route your requests to the appropriate IB Gateway service. You should also update your configuration file whenever you modify your market data permissions in IBKR Client Portal.

An example IB Gateway permissions template is available from the JupyterLab launcher.

QuantRocket looks for a market data permission file called quantrocket.ibg.permissions.yml in the top-level of the Jupyter file browser (that is, /codeload/quantrocket.ibg.permissions.yml). The format of the YAML file is shown below:

ibg1:

marketdata:

STK:

- NYSE

- ISLAND

- TSEJ

FUT:

- GLOBEX

- OSE

CASH:

- IDEALPRO

research:

- wsh

ibg2:

marketdata:

STK:

- NYSE

When you create or edit this file, QuantRocket will detect the change and load the configuration. It's a good idea to have flightlog open when you do this. If the configuration file is valid, you'll see a success message:

quantrocket.ibgrouter: INFO Successfully loaded /codeload/quantrocket.ibg.permissions.yml

If the configuration file is invalid, you'll see an error message:

quantrocket.ibgrouter: ERROR Could not load /codeload/quantrocket.ibg.permissions.yml:

quantrocket.ibgrouter: ERROR unknown key(s) for service ibg1: marketdata-typo

You can also dump out the currently loaded config to confirm it is as you expect:

$ quantrocket ibg config

ibg1:

marketdata:

CASH:

- IDEALPRO

FUT:

- GLOBEX

- OSE

STK:

- NYSE

- ISLAND

- TSEJ

research:

- reuters

- wsh

ibg2:

marketdata:

STK:

- NYSE

>>> from quantrocket.ibg import get_ibg_config

>>> get_ibg_config()

{

'ibg1': {

'marketdata': {

'CASH': [

'IDEALPRO'

],

'FUT': [

'GLOBEX',

'OSE'

],

'STK': [

'NYSE',

'ISLAND',

'TSEJ'

]

},

'research': [

'reuters',

'wsh'

]

},

'ibg2': {

'marketdata': {

'STK': [

'NYSE'

]

}

}

}

$ curl -X GET 'http://houston/ibgrouter/config'

{

"ibg1": {

"marketdata": {

"CASH": [

"IDEALPRO"

],

"FUT": [

"GLOBEX",

"OSE"

],

"STK": [

"NYSE",

"ISLAND",

"TSEJ"

]

},

"research": [

"reuters",

"wsh"

]

},

"ibg2": {

"marketdata": {

"STK": [

"NYSE"

]

}

}

}

IB Gateway log files

If you need to send your IB Gateway log files to IBKR for troubleshooting, you can use the IB Gateway GUI to export the log files to the Docker filesystem, then copy them to your local filesystem.

- With IB Gateway running, open the GUI.

- In the IB Gateway GUI, click File > Gateway Logs, and select the day you're interested in.

- For small logs, you can view the logs directly in IB Gateway and copy them to your clipboard.

- For larger logs, click Export Logs or Export Today Logs. A file browser will open, showing the filesystem inside the Docker container.

- Export the log file to an easy-to-find location such as

/tmp/ibgateway-exported-logs.txt. - From the host machine, copy the exported logs from the Docker container to your local filesystem. For

ibg1 logs saved to the above location, the command would be:

$ docker cp quantrocket_ibg1_1:/tmp/ibgateway-exported-logs.txt ibgateway-exported-logs.txt

Alpaca

Your credentials are encrypted at rest and never leave your deployment.

Alpaca supports live and paper trading using two separate pairs of API keys and secret keys. Enter each pair of keys to enable the respective type of trading:

$

$ quantrocket license alpaca-key --api-key 'XXXXXXXXXXXXXXXXXX' --live

Enter Alpaca secret key:

status: successfully set Alpaca live API key

$

$ quantrocket license alpaca-key --api-key 'PXXXXXXXXXXXXXXXXXX' --paper

Enter Alpaca secret key:

status: successfully set Alpaca paper API key

>>>

>>> from quantrocket.license import set_alpaca_key

>>> set_alpaca_key(api_key="XXXXXXXXXXXXXXXXXX", trading_mode="live")

Enter Alpaca secret key:

{'status': 'successfully set Alpaca live API key'}

>>>

>>> set_alpaca_key(api_key="PXXXXXXXXXXXXXXXXXX", trading_mode="paper")

Enter Alpaca secret key:

{'status': 'successfully set Alpaca paper API key'}

$

$ curl -X PUT 'http://houston/license-service/credentials/alpaca' -d 'api_key=XXXXXXXXXXXXXXXXXX&secret_key=XXXXXXXXXXXXXXXXXX&trading_mode=live'

{"status": "successfully set Alpaca live API key"}

$

$ curl -X PUT 'http://houston/license-service/credentials/alpaca' -d 'api_key=PXXXXXXXXXXXXXXXXXX&secret_key=XXXXXXXXXXXXXXXXXX&trading_mode=paper'

{"status": "successfully set Alpaca paper API key"}

You can view the currently configured API keys:

$ quantrocket license alpaca-key

live:

api_key: XXXXXXXXXXXXXXXXXX

paper:

api_key: PXXXXXXXXXXXXXXXXXX

>>> from quantrocket.license import get_alpaca_key

>>> get_alpaca_key()

{'live': {'api_key': 'XXXXXXXXXXXXXXXXXX'},

'paper': {'api_key': 'PXXXXXXXXXXXXXXXXXX'}}

$ curl -X GET 'http://houston/license-service/credentials/alpaca'

{"live": {"api_key": "XXXXXXXXXXXXXXXXXX"}, "paper": {"api_key": "PXXXXXXXXXXXXXXXXXX"}}

If you have access to Polygon.io data through Alpaca and wish to access Polygon.io data in QuantRocket, you should additionally enter your Alpaca key as your Polygon API key, as described below.

Polygon.io

Your credentials are encrypted at rest and never leave your deployment.

To enable access to Polygon.io data, enter your Polygon.io API key (or Alpaca API key, for users with Polygon.io access through Alpaca):

$ quantrocket license polygon-key 'XXXXXXXXXXXXXXXXXX'

status: successfully set Polygon API key

>>> from quantrocket.license import set_polygon_key

>>> set_polygon_key(api_key="XXXXXXXXXXXXXXXXXX")

{'status': 'successfully set Polygon API key'}

$ curl -X PUT 'http://houston/license-service/credentials/polygon' -d 'api_key=XXXXXXXXXXXXXXXXXX'

{"status": "successfully set Polygon API key"}

You can view the currently configured API key:

$ quantrocket license polygon-key

api_key: XXXXXXXXXXXXXXXXXX

>>> from quantrocket.license import get_polygon_key

>>> get_polygon_key()

{'api_key': 'XXXXXXXXXXXXXXXXXX'}

curl -X GET 'http://houston/license-service/credentials/polygon'

{"api_key": "XXXXXXXXXXXXXXXXXX"}

Quandl

Your credentials are encrypted at rest and never leave your deployment.

Professional users who subscribe to Sharadar data through Quandl can access Sharadar data in QuantRocket. To enable access, enter your Quandl API key:

$ quantrocket license quandl-key 'XXXXXXXXXXXXXXXXXX'

status: successfully set Quandl API key

>>> from quantrocket.license import set_quandl_key

>>> set_quandl_key(api_key="XXXXXXXXXXXXXXXXXX")

{'status': 'successfully set Quandl API key'}

$ curl -X PUT 'http://houston/license-service/credentials/quandl' -d 'api_key=XXXXXXXXXXXXXXXXXX'

{"status": "successfully set Quandl API key"}

You can view the currently configured API key:

$ quantrocket license quandl-key

api_key: XXXXXXXXXXXXXXXXXX

>>> from quantrocket.license import get_quandl_key

>>> get_quandl_key()

{'api_key': 'XXXXXXXXXXXXXXXXXX'}

curl -X GET 'http://houston/license-service/credentials/quandl'

{"api_key": "XXXXXXXXXXXXXXXXXX"}

IDEs and Editors

QuantRocket allows you to work in several different IDEs (integrated development environments) and editors.

Comparison

A summary comparison of the availables IDEs and editors is shown below:

| JupyterLab | Eclipse Theia | VS Code |

|---|

| ideal for | interactive research | code editing from any computer | desktop code editing |

| runs in | browser | browser | desktop |

| supports Jupyter Notebooks? | yes | no | experimental |

| supports Terminals? | yes | no | yes |

| setup required? | no | no | yes |

JupyterLab is the primary user interface for QuantRocket. It is an ideal environment for interactive research. You can access QuantRocket's Python API through JupyterLab Consoles and Notebooks, and you can access QuantRocket's command line interface (CLI) through JupyterLab Terminals.

A limitation of JupyterLab is that its text editor is very basic, providing syntax highlighting but not much more. For a better code editing experience, you can use Eclipse Theia or Visual Studio Code.

Eclipse Theia and VS Code have similar user interfaces, so what are the differences? Eclipse Theia runs in the browser and requires no setup; thus you can edit your code from any computer. Theia provides syntax highlighting, auto-completion, linting, and a Git integration. Other features such as terminals are disabled.

VS Code runs on your desktop and requires some basic setup, but offers a fuller-featured editing experience. We suggest using VS Code on your main workstation and using Eclipse Theia when on-the-go.

JupyterLab

See the QuickStart for a hands-on overview of JupyterLab.

A recommended workflow for Moonshot strategies and custom scripts is to develop your code interactively in a Jupyter notebook then transfer it to a .py file.

Eclipse Theia

Access Eclipse Theia from the JupyterLab launcher:

Visual Studio Code

You can install Visual Studio Code on your desktop and attach it to your local or cloud deployment. This allows you to edit code and open terminals from within VS Code. VS Code utilizes the environment provided by the QuantRocket container you attach to, so autocomplete and other features are based on the QuantRocket environment, meaning there's no need to manually replicate QuantRocket's environment on your local computer.

Follow these steps to use VS Code with QuantRocket.

- First, download and install VS Code for your operating system.

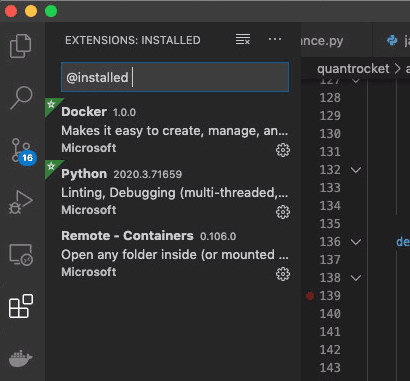

- In VS Code, open the extension manager and install the following extensions:

- Python

- Docker

- Remote - Containers

- For cloud deployments only: By default, VS Code will be able to see any Docker containers running on your local machine. To make VS Code see your QuantRocket containers running remotely in the cloud, follow these steps:

- Open the command palette (View > Command Palette) and search for and run the command called:

Shell Command: Install 'code' command in PATH. This makes it possible to launch VS Code from a Terminal or Powershell by typing code. - Completely close VS Code.

- In a Terminal or Powershell, run

docker-machine env quantrocket and run the provided command output, just as you would to deploy QuantRocket. This command sets environment variables which point Docker to the remote host where you are running QuantRocket. - From the same Terminal or Powershell window, type

code to launch VS Code. This causes VS Code to inherit the environment of the terminal from which you launched it, which enables VS Code to see the containers running remotely. - Each time you launch VS Code in the future, you must launch it from a terminal as described in steps 3 and 4.

- Open the Docker panel in the side bar, find the jupyter container, right-click, and choose "Attach Visual Studio Code". A new window opens.

- (The original VS Code window still points to your local computer and can be used to edit your local projects.)

- The new VS Code window that opened is attached to the jupyter container. VS code will automatically install itself on the jupyter container.

- Any extensions you may have installed on your local VS Code are not automatically installed on the remote VS Code, so you should install them. Open the Extensions Manager and install, at minimum, the Python extension, and anything else you like. VS Code remembers what you install in a local configuration file and restores your desired environment in the future even if you destroy and re-create the container.

- In the Explorer window, click Open Folder, type 'codeload', then Open Folder. The files on your jupyter container will now be displayed in the VS Code file browser.

Jupyter notebooks in VS Code

Support for running Jupyter notebooks in VS Code is experimental. If you encounter problems starting notebooks in VS Code, please use JupyterLab instead.

If you wish to use Jupyter notebooks in VS Code, follow these steps:

- Open the command palette (View > Command Palette) and search for and select the command called:

Python: Specify Local or Remote Jupyter Server for connections. - On the next menu, select

Existing: Specify the URI of an existing server. - Enter the following URL:

http://localhost/jupyter (this applies both to local and cloud deployments) - Reload the VS Code window if prompted to do so.

- Open an existing Jupyter notebook. (Creating notebooks from within VS Code may or may not work.)

- The first time you execute a cell, VS Code will prompt for a password. Simply hit enter. (No password is needed as you are already inside jupyter and simply connecting to localhost.)

Terminal utilities

.bashrc

You can customize your JupyterLab Terminals by creating a .bashrc file and storing it at /codeload/.bashrc. This file will be run when you open a new terminal, just like on a standard Linux distribution.

An example use is to create aliases for commonly typed commands. For example, placing the following alias in your /codeload/.bashrc file will allow you to check your balance by simply typing balance:

alias balance="quantrocket account balance -l -f NetLiquidation | csvlook"

After adding or editing a .bashrc file, you must open new Terminals for the changes to take effect.

csvkit

Many QuantRocket API endpoints return CSV files. csvkit is a suite of utilities that makes it easier to work with CSV files from the command line. To make a CSV file more easily readable, use csvlook:

$ quantrocket master get --exchanges 'XNAS' 'XNYS' | csvlook -I

| Sid | Symbol | Exchange | Country | Currency | SecType | Etf | Timezone | Name |

| -------------- | ------ | -------- | ------- | -------- | ------- | --- | ------------------- | -------------------------- |

| FIBBG000B9XRY4 | AAPL | XNAS | US | USD | STK | 0 | America/New_York | APPLE INC |

| FIBBG000BFWKC0 | MON | XNYS | US | USD | STK | 0 | America/New_York | MONSANTO CO |

| FIBBG000BKZB36 | HD | XNYS | US | USD | STK | 0 | America/New_York | HOME DEPOT INC |

| FIBBG000BMHYD1 | JNJ | XNYS | US | USD | STK | 0 | America/New_York | JOHNSON & JOHNSON |

Another useful utility is csvgrep, which can be used to filter CSV files on fields not natively filterable by QuantRocket's API:

$

$ quantrocket master get --exchanges 'XNYS' --fields 'usstock_SecurityType2' | csvgrep --columns 'usstock_SecurityType2' --match 'Depositary Receipt' > nyse_adrs.csv

json2yml

For records which are too wide for the Terminal viewing area in CSV format, a convenient option is to request JSON and convert it to YAML using the json2yml utility:

$ quantrocket master get --symbols 'AAPL' --json | json2yml

-

Sid: "FIBBG000B9XRY4"

Symbol: "AAPL"

Exchange: "XNAS"

Country: "US"

Currency: "USD"

SecType: "STK"

Etf: 0

Timezone: "America/New_York"

Name: "APPLE INC"

PriceMagnifier: 1

Multiplier: 1

Delisted: 0

DateDelisted: null

LastTradeDate: null

RolloverDate: null

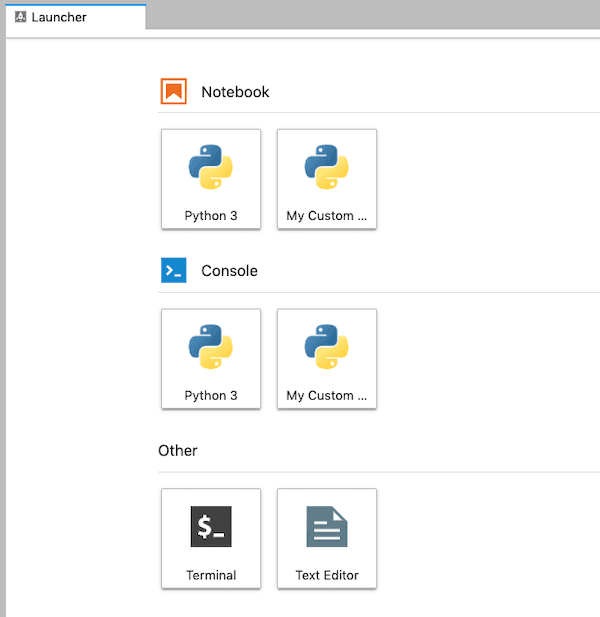

Custom JupyterLab environments

Follow these steps to create a custom conda environment and make it available as a custom kernel from the JupyterLab launcher.

This is an advanced topic. Most users will not need to do this.

Keep in mind that QuantRocket has a distributed architecture and these steps will only create the custom environment within the jupyter container, not in other containers where user code may run, such as the moonshot, zipline, and satellite containers.

First-time install

First, in a JupyterLab terminal, initialize your bash shell then exit the terminal:

$ conda init 'bash'

$ exit

Open a new JupyterLab terminal, then clone the base environment and activate your new environment:

$ conda create --name 'myclone' --clone 'base'

$ conda activate 'myclone'

Install new packages to customize your conda environment. For easier repeatability, list your packages in a text file in the /codeload directory and install the packages from file. One of the packages should be ipykernel:

$ (myclone) $ echo 'ipykernel' > /codeload/quantrocket.jupyter.conda.myclone.txt

$ (myclone) $

$ (myclone) $ conda install --file '/codeload/quantrocket.jupyter.conda.myclone.txt'

Next, create a new kernel spec associated with your custom conda environment. For easier repeatability, create the kernel spec under the /codeload directory instead of directly in the default location:

$ (myclone) $

$ (myclone) $ ipython kernel install --name 'mykernel' --display-name 'My Custom Kernel' --prefix '/codeload/kernels'

Install the kernel. This command copies the kernel spec to a location where JupyterLab looks:

$ (myclone) $ jupyter kernelspec install '/codeload/kernels/share/jupyter/kernels/mykernel'

Finally, to activate the change, open Terminal (MacOS/Linux) or PowerShell (Windows) and restart the jupyter container:

$ docker-compose restart jupyter

The new kernel will appear in the Launcher menu:

Re-install after container redeploy

Whenever you redeploy the jupyter container (either due to updating the container version or force recreating the container), the filesystem is replaced and thus your custom conda environment and JupyterLab kernel will be lost. The re-install process can omit a few steps because you saved the conda package file and kernel spec to your /codeload directory. The simplified process is as follows. Initialize your shell:

$ conda init 'bash'

$ exit

Reopen a terminal, then:

$

$ conda create --name 'myclone' --clone 'base'

$ conda activate 'myclone'

$ (myclone) $

$ (myclone) $ conda install --file '/codeload/quantrocket.jupyter.conda.myclone.txt'

$ (myclone) $

$ (myclone) $ jupyter kernelspec install '/codeload/kernels/share/jupyter/kernels/mykernel'

Then, restart the jupyter container to activate the change:

$ docker-compose restart jupyter

Securities Master

The securities master is the central repository of available assets. With QuantRocket's securities master, you can:

- Collect lists of all available securities from multiple data providers;

- Query reference data about securities, such as ticker symbol, currency, exchange, sector, expiration date (in the case of derivatives), and so on;

- Flexibly group securities into universes that make sense for your research or trading strategies.

QuantRocket assigns each security a unique ID known as its "Sid" (short for "security ID"). Sids allow securities to be uniquely and consistently referenced over time regardless of ticker changes or ticker symbol inconsistencies between vendors. Sids make it possible to mix-and-match data from different providers. QuantRocket Sids are primarily based on Bloomberg-sponsored OpenFIGI identifiers.

All components of the software, from historical and fundamental data collection to order and execution tracking, utilize Sids and thus depend on the securities master.

Collect listings

Generally, the first step before utilizing any dataset or sending orders to any broker is to collect the list of available securities for that provider.

Note on terminology: In QuantRocket, "collecting" data means retrieving it from a third-party and storing it in a local database. Once data has been collected, you can "download" it, which means to query the stored data from your local database for use in your analysis or trading strategies.

Because QuantRocket supports multiple data vendors and brokers, you may collect the same listing (for example AAPL stock) from multiple providers. QuantRocket will consolidate the overlapping records into a single, combined record, as explained in more detail below.

Alpaca

Alpaca customers should collect Alpaca's list of available securities before they begin live or paper trading:

$ quantrocket master collect-alpaca

msg: successfully loaded alpaca securities

status: success

>>> from quantrocket.master import collect_alpaca_listings

>>> collect_alpaca_listings()

{'status': 'success', 'msg': 'successfully loaded alpaca securities'}

$ curl -X POST 'http://houston/master/securities/alpaca'

{"status": "success", "msg": "successfully loaded alpaca securities"}

An example Alpaca record for AAPL is shown below:

Sid: "FIBBG000B9XRY4"

alpaca_AssetClass: "us_equity"

alpaca_AssetId: "b0b6dd9d-8b9b-48a9-ba46-b9d54906e415"

alpaca_EasyToBorrow: 1

alpaca_Exchange: "NASDAQ"

alpaca_Marginable: 1

alpaca_Name: null

alpaca_Shortable: 1

alpaca_Status: "active"

alpaca_Symbol: "AAPL"

alpaca_Tradable: 1

EDI

EDI listings are automatically collected when you collect EDI historical data, but they can also be collected separately. Specify one or MICs (market identifier codes):

$ quantrocket master collect-edi --exchanges 'XSHG' 'XSHE'

exchanges:

XSHE: successfully loaded XSHE securities

XSHG: successfully loaded XSHG securities

status: success

>>> from quantrocket.master import collect_edi_listings

>>> collect_edi_listings(exchanges=["XSHG", "XSHE"])

{'status': 'success',

'exchanges': {'XSHG': 'successfully loaded XSHG securities', 'XSHE': 'successfully loaded XSHE securities'}}

$ curl -X POST 'http://houston/master/securities/edi?exchanges=XSHG&exchanges=XSHE'

{"status": "success", "exchanges": {"XSHG": "successfully loaded XSHG securities", "XSHE": "successfully loaded XSHE securities"}}

For sample data, use the MIC code FREE.

An example EDI record for AAPL is shown below:

Sid: "FIBBG000B9XRY4"

edi_Cik: 320193

edi_CountryInc: "United States of America"

edi_CountryListed: "United States of America"

edi_Currency: "USD"

edi_DateDelisted: null

edi_ExchangeListingStatus: "Listed"

edi_FirstPriceDate: "2007-01-03"

edi_GlobalListingStatus: "Active"

edi_Industry: "Information Technology"

edi_IsPrimaryListing: 1

edi_IsoCountryInc: "US"

edi_IsoCountryListed: "US"

edi_IssuerId: 30017

edi_IssuerName: "Apple Inc"

edi_LastPriceDate: null

edi_LocalSymbol: "AAPL"

edi_Mic: "XNAS"

edi_MicSegment: "XNGS"

edi_MicTimezone: "America/New_York"

edi_PreferredName: "Apple Inc"

edi_PrimaryMic: "XNAS"

edi_RecordCreated: "2001-05-05"

edi_RecordModified: "2020-02-10 13:17:27"

edi_SecId: 33449

edi_SecTypeCode: "EQS"

edi_SecTypeDesc: "Equity Shares"

edi_SecurityDesc: "Ordinary Shares"

edi_Sic: "Electronic Computers"

edi_SicCode: 3571

edi_SicDivision: "Manufacturing"

edi_SicIndustryGroup: "Computer And Office Equipment"

edi_SicMajorGroup: "Industrial And Commercial Machinery And Computer Equipment"

edi_StructureCode: null

edi_StructureDesc: null

Figi

QuantRocket Sids are based on FIGI identifiers. While the OpenFIGI API is primarily a way to map securities to FIGI identifiers, it also provides several useful security attributes including market sector, a detailed security type, and share class-level FIGI identifiers. You can collect FIGI fields for all available QuantRocket securities:

$ quantrocket master collect-figi

msg: successfully loaded FIGIs

status: success

>>> from quantrocket.master import collect_figi_listings

>>> collect_figi_listings()

{'status': 'success', 'msg': 'successfully loaded FIGIs'}

$ curl -X POST 'http://houston/master/securities/figi'

{"status": "success", "msg": "successfully loaded FIGIs"}

An example FIGI record for AAPL is shown below:

Sid: "FIBBG000B9XRY4"

figi_CompositeFigi: "BBG000B9XRY4"

figi_ExchCode: "US"

figi_Figi: "BBG000B9XRY4"

figi_IsComposite: 1

figi_MarketSector: "Equity"

figi_Name: "APPLE INC"

figi_SecurityDescription: "AAPL"

figi_SecurityType: "Common Stock"

figi_SecurityType2: "Common Stock"

figi_ShareClassFigi: "BBG001S5N8V8"

figi_Ticker: "AAPL"

figi_UniqueId: "EQ0010169500001000"

figi_UniqueIdFutOpt: null

Interactive Brokers

Interactive Brokers can be utilized both as a data provider and a broker. First, decide which exchange(s) you want to work with. You can view exchange listings on the IBKR website or use QuantRocket to summarize the IBKR website by security type:

$ quantrocket master list-ibkr-exchanges --regions 'asia' --sec-types 'STK'

STK:

Australia:

- ASX

- CHIXAU

Hong Kong:

- SEHK

- SEHKNTL

- SEHKSZSE

India:

- NSE

Japan:

- CHIXJ

- JPNNEXT

- TSEJ

Singapore:

- SGX

>>> from quantrocket.master import list_ibkr_exchanges

>>> list_ibkr_exchanges(regions=["asia"], sec_types=["STK"])

{'STK': {'Australia': ['ASX', 'CHIXAU'],

'Hong Kong': ['SEHK', 'SEHKNTL', 'SEHKSZSE'],

'India': ['NSE'],

'Japan': ['CHIXJ', 'JPNNEXT', 'TSEJ'],

'Singapore': ['SGX']}}

$ curl 'http://houston/master/exchanges/ibkr?sec_types=STK®ions=asia'

{"STK": {"Australia": ["ASX", "CHIXAU"], "Hong Kong": ["SEHK", "SEHKNTL", "SEHKSZSE"], "India": ["NSE"], "Japan": ["CHIXJ", "JPNNEXT", "TSEJ"], "Singapore": ["SGX"]}}

Specify the IBKR exchange code (not the MIC) to collect all listings on the exchange, optionally filtering by security type, symbol, or currency. For example, this would collect all stock listings on the Hong Kong Stock Exchange:

$ quantrocket master collect-ibkr --exchanges 'SEHK' --sec-types 'STK'

status: the IBKR listing details will be collected asynchronously

>>> from quantrocket.master import collect_ibkr_listings

>>> collect_ibkr_listings(exchanges="SEHK", sec_types=["STK"])

{'status': 'the IBKR listing details will be collected asynchronously'}

$ curl -X POST 'http://houston/master/securities/ibkr?exchanges=SEHK&sec_types=STK'

{"status": "the IBKR listing details will be collected asynchronously"}

$ quantrocket flightlog stream --hist 5

quantrocket.master: INFO Collecting SEHK STK listings from IBKR website

quantrocket.master: INFO Requesting details for 2630 SEHK listings found on IBKR website

quantrocket.master: INFO Saved 2630 SEHK listings to securities master database

The number of listings collected from the IBKR website might be larger than the number of listings actually saved to the database. This is because the IBKR website lists all symbols that trade on a given exchange, even if the exchange is not the primary listing exchange. For example, the primary listing exchange for Alcoa (AA) is NYSE, but the IBKR website also lists Alcoa under the BATS exchange because Alcoa also trades on BATS (and many other US exchanges). QuantRocket saves Alcoa's contract details when you collect NYSE listings, not when you collect BATS listings. For futures, the number of contracts saved to the database will typically be larger than the number of listings found on the IBKR website because the website only lists underlyings but QuantRocket saves all available expiries for each underlying.

For free sample data, specify the exchange code FREE.

An example IBKR record for AAPL is shown below:

Sid: "FIBBG000B9XRY4"

ibkr_AggGroup: 1

ibkr_Category: "Computers"

ibkr_ComboLegs: null

ibkr_ConId: 265598

ibkr_ContractMonth: null

ibkr_Currency: "USD"

ibkr_Cusip: null

ibkr_DateDelisted: null

ibkr_Delisted: 0

ibkr_Etf: 0

ibkr_EvMultiplier: 0

ibkr_EvRule: null

ibkr_Industry: "Computers"

ibkr_Isin: "US0378331005"

ibkr_LastTradeDate: null

ibkr_LocalSymbol: "AAPL"

ibkr_LongName: "APPLE INC"

ibkr_MarketName: "NMS"

ibkr_MarketRuleIds: "26,26,26,26,26,26,26,26,26,26,26,26,26,26,26,26,26,26,26,26,26,26"

ibkr_MdSizeMultiplier: 100

ibkr_MinTick: 0.01

ibkr_Multiplier: null

ibkr_PriceMagnifier: 1

ibkr_PrimaryExchange: "NASDAQ"

ibkr_RealExpirationDate: null

ibkr_Right: null

ibkr_SecType: "STK"

ibkr_Sector: "Technology"

ibkr_Strike: 0

ibkr_Symbol: "AAPL"

ibkr_Timezone: "America/New_York"

ibkr_TradingClass: "NMS"

ibkr_UnderConId: 0

ibkr_UnderSecType: null

ibkr_UnderSymbol: null

ibkr_ValidExchanges: "SMART,AMEX,NYSE,CBOE,PHLX,ISE,CHX,ARCA,ISLAND,DRCTEDGE,BEX,BATS,EDGEA,CSFBALGO,JEFFALGO,BYX,IEX,EDGX,FOXRIVER,TPLUS1,NYSENAT,PSX"

Option chains

To collect option chains from Interactive Brokers, first collect listings for the underlying securities:

$ quantrocket master collect-ibkr --exchanges 'NASDAQ' --sec-types 'STK' --symbols 'GOOG' 'FB' 'AAPL'

status: the IBKR listing details will be collected asynchronously

>>> from quantrocket.master import collect_ibkr_listings

>>> collect_ibkr_listings(exchanges="NASDAQ", sec_types=["STK"], symbols=["GOOG", "FB", "AAPL"])

{'status': 'the IBKR listing details will be collected asynchronously'}

$ curl -X POST 'http://houston/master/securities/ibkr?exchanges=NASDAQ&sec_types=STK&symbols=GOOG&symbols=FB&symbols=AAPL'

{"status": "the IBKR listing details will be collected asynchronously"}

Then request option chains by specifying the sids of the underlying stocks. In this example, we download a file of the underlying stocks and pass it as an infile to the options collection endpoint:

$ quantrocket master get -e 'NASDAQ' -t 'STK' -s 'GOOG' 'FB' 'AAPL' | quantrocket master collect-ibkr-options --infile -

status: the IBKR option chains will be collected asynchronously

>>> from quantrocket.master import download_master_file, collect_ibkr_option_chains

>>> import io

>>> f = io.StringIO()

>>> download_master_file(f, exchanges=["NASDAQ"], sec_types=["STK"], symbols=["GOOG", "FB", "AAPL"])

>>> collect_ibkr_option_chains(infilepath_or_buffer=f)

{'status': 'the IBKR option chains will be collected asynchronously'}

$ curl -X GET 'http://houston/master/securities.csv?exchanges=NASDAQ&sec_types=STK&symbols=GOOG&symbols=FB&symbols=AAPL' > nasdaq_mega.csv

$ curl -X POST 'http://houston/master/options/ibkr' --upload-file nasdaq_mega.csv

{"status": "the IBKR option chains will be collected asynchronously"}

Once the options collection has finished, you can query the options like any other security:

$ quantrocket master get -s 'GOOG' 'FB' 'AAPL' -t 'OPT' --outfile 'options.csv'

>>> from quantrocket.master import download_master_file

>>> download_master_file("options.csv", symbols=["GOOG", "FB", "AAPL"], sec_types=["OPT"])

$ curl -X GET 'http://houston/master/securities.csv?symbols=GOOG&symbols=FB&symbols=AAPL&sec_types=OPT' > options.csv

Option chains often consist of hundreds, sometimes thousands of options per underlying security. Requesting option chains for large universes of underlying securities, such as all stocks on the NYSE, can take numerous hours to complete.

Sharadar

Sharadar listings are automatically collected when you collect Sharadar fundamental or price data, but they can also be collected separately. Specify the country (US):

$ quantrocket master collect-sharadar --countries 'US'

countries:

US: successfully loaded US securities

status: success

>>> from quantrocket.master import collect_sharadar_listings

>>> collect_sharadar_listings(countries="US")

>>> {'status': 'success', 'countries': {'US': 'successfully loaded US securities'}}

$ curl -X POST 'http://houston/master/securities/sharadar?countries=US'

{"status": "success", "countries": {"US": "successfully loaded US securities"}}

For sample data, use the country code FREE.

An example Sharadar record for AAPL is shown below:

Sid: "FIBBG000B9XRY4"

sharadar_Category: "Domestic"

sharadar_CompanySite: "http://www.apple.com"

sharadar_CountryListed: "US"

sharadar_Currency: "USD"

sharadar_Cusips: 37833100

sharadar_DateDelisted: null

sharadar_Delisted: 0

sharadar_Exchange: "NASDAQ"

sharadar_FamaIndustry: "Computers"

sharadar_FamaSector: null

sharadar_FirstAdded: "2014-09-24"

sharadar_FirstPriceDate: "1986-01-01"

sharadar_FirstQuarter: "1996-09-30"

sharadar_Industry: "Consumer Electronics"

sharadar_LastPriceDate: null

sharadar_LastQuarter: "2020-06-30"

sharadar_LastUpdated: "2020-07-03"

sharadar_Location: "California; U.S.A"

sharadar_Name: "Apple Inc"

sharadar_Permaticker: 199059

sharadar_RelatedTickers: null

sharadar_ScaleMarketCap: "6 - Mega"

sharadar_ScaleRevenue: "6 - Mega"

sharadar_SecFilings: "https://www.sec.gov/cgi-bin/browse-edgar?action=getcompany&CIK=0000320193"

sharadar_Sector: "Technology"

sharadar_SicCode: 3571

sharadar_SicIndustry: "Electronic Computers"

sharadar_SicSector: "Manufacturing"

sharadar_Ticker: "AAPL"

US Stock

All plans include access to historical intraday and end-of-day US stock prices. US stock listings are automatically collected when you collect the price data, but they can also be collected separately.

$ quantrocket master collect-usstock

msg: successfully loaded US stock listings

status: success

>>> from quantrocket.master import collect_usstock_listings

>>> collect_usstock_listings()

{'status': 'success', 'msg': 'successfully loaded US stock listings'}

$ curl -X POST 'http://houston/master/securities/usstock'

{"status": "success", "msg": "successfully loaded US stock listings"}

An example US stock record for AAPL is shown below:

Sid: "FIBBG000B9XRY4"

usstock_DateDelisted: null

usstock_FirstPriceDate: "2007-01-03"

usstock_LastPriceDate: null

usstock_Mic: "XNAS"

usstock_Name: "APPLE INC"

usstock_SecurityType: "Common Stock"

usstock_SecurityType2: "Common Stock"

usstock_Sic: "Electronic Computers"

usstock_SicCode: 3571

usstock_SicDivision: "Manufacturing"

usstock_SicIndustryGroup: "Computer And Office Equipment"

usstock_SicMajorGroup: "Industrial And Commercial Machinery And Computer Equipment"

usstock_Symbol: "AAPL"

Master file

After you collect listings, you can download and inspect the master file, querying by symbol, exchange, currency, sid, or universe. When querying by exchange, you can use the MIC as in the following example (preferred), or the vendor-specific exchange code:

$ quantrocket master get --exchanges 'XNAS' 'XNYS' -o listings.csv

$ csvlook listings.csv

| Sid | Symbol | Exchange | Country | Currency | SecType | Etf | Timezone | Name |

| -------------- | ------ | -------- | ------- | -------- | ------- | --- | ------------------- | -------------------------- |

| FIBBG000B9XRY4 | AAPL | XNAS | US | USD | STK | 0 | America/New_York | APPLE INC |

| FIBBG000BFWKC0 | MON | XNYS | US | USD | STK | 0 | America/New_York | MONSANTO CO |

| FIBBG000BKZB36 | HD | XNYS | US | USD | STK | 0 | America/New_York | HOME DEPOT INC |

| FIBBG000BMHYD1 | JNJ | XNYS | US | USD | STK | 0 | America/New_York | JOHNSON & JOHNSON |

| FIBBG000BPH459 | MSFT | XNAS | US | USD | STK | 0 | America/New_York | MICROSOFT CORP |

>>> import pandas as pd

>>> from quantrocket.master import download_master_file

>>> download_master_file("listings.csv", exchanges=["XNYS", "XNAS"])

>>> securities = pd.read_csv("listings.csv")

>>> securities.head()

Sid Symbol Exchange Country Currency SecType Etf Timezone Name

0 FIBBG000B9XRY4 AAPL XNAS US USD STK 0 America/New_York APPLE INC

1 FIBBG000BFWKC0 MON XNYS US USD STK 0 America/New_York MONSANTO CO

2 FIBBG000BKZB36 HD XNYS US USD STK 0 America/New_York HOME DEPOT INC

3 FIBBG000BMHYD1 JNJ XNYS US USD STK 0 America/New_York JOHNSON & JOHNSON

4 FIBBG000BPH459 MSFT XNAS US USD STK 0 America/New_York MICROSOFT CORP

$ curl -X GET 'http://houston/master/securities.csv?exchanges=XNYS&exchanges=XNAS' > listings.csv

$ head listings.csv

Sid,Symbol,Exchange,Country,Currency,SecType,Etf,Timezone,Name

FIBBG000B9XRY4,AAPL,XNAS,US,USD,STK,0,America/New_York,"APPLE INC"

FIBBG000BFWKC0,MON,XNYS,US,USD,STK,0,America/New_York,"MONSANTO CO"

FIBBG000BKZB36,HD,XNYS,US,USD,STK,0,America/New_York,"HOME DEPOT INC"

FIBBG000BMHYD1,JNJ,XNYS,US,USD,STK,0,America/New_York,"JOHNSON & JOHNSON"

FIBBG000BPH459,MSFT,XNAS,US,USD,STK,0,America/New_York,"MICROSOFT CORP"

Core vs extended fields

By default, the securities master file returns a core set of fields:

Sid: unique security IDSymbol: ticker symbolExchange: the MIC (market identifier code) of the primary exchangeCountry: ISO country codeCurrency: ISO currencySecType: the security type. See available typesETF: 1 if the security is an ETF, otherwise 0Timezone: timezone of the exchangeName: issuer name or security descriptionPriceMagnifier: price divisor to use when prices are quoted in a different currency than the security's currency (for example GBP-denominated securities which trade in GBX will have an PriceMagnifier of 100). This is used by QuantRocket but users won't usually need to worry about it.Multiplier: contract multiplier for derivativesDelisted: 1 if the security is delisted, otherwise 0DateDelisted: date security was delistedLastTradeDate: last trade date for derivativesRolloverDate: rollover date for futures contracts

These fields are consolidated from the available vendor records you've collected. In other words, QuantRocket will populate the core fields from any vendor that provides that field, based on the vendors you have collected listings from.

You can also access the extended fields, which are not consolidated but rather provide the exact values for a specific vendor. Extended fields are named like <vendor>_<FieldName> and can be requested in several ways, including by field name (e.g. usstock_Mic):

$ quantrocket master get --symbols 'AAPL' --fields 'Symbol' 'Exchange' 'usstock_Symbol' 'usstock_Mic' --json | json2yml

---

-

Sid: "FIBBG000B9XRY4"

Symbol: "AAPL"

Exchange: "XNAS"

usstock_Mic: "XNAS"

usstock_Symbol: "AAPL"

>>> download_master_file("aapl.csv", symbols="AAPL", fields=["Symbol", "Exchange", "usstock_Symbol", "usstock_Mic"])

>>> securities = pd.read_csv("aapl.csv")

>>> securities.iloc[0]

Sid FIBBG000B9XRY4

Symbol AAPL

Exchange XNAS

usstock_Mic XNAS

usstock_Symbol AAPL

$ curl -X GET 'http://houston/master/securities.json?symbols=AAPL&fields=Symbol&fields=Exchange&fields=usstock_Symbol&fields=usstock_Mic' | json2yml

---

-

Sid: "FIBBG000B9XRY4"

Symbol: "AAPL"

Exchange: "XNAS"

usstock_Mic: "XNAS"

usstock_Symbol: "AAPL"

Use the wildcard

<vendor>* to return all fields for a vendor (see the command or function help for the available vendor prefixes):

$ quantrocket master get --symbols 'AAPL' --fields 'usstock*' --json | json2yml

---

-

Sid: "FIBBG000B9XRY4"

usstock_DateDelisted: null

usstock_FirstPriceDate: "2007-01-03"

usstock_LastPriceDate: "2020-04-03"

usstock_Mic: "XNAS"

usstock_Name: "APPLE INC"

usstock_SecurityType: "Common Stock"

usstock_SecurityType2: "Common Stock"

usstock_Sic: "Electronic Computers"

usstock_SicCode: 3571

usstock_SicDivision: "Manufacturing"

usstock_SicIndustryGroup: "Computer And Office Equipment"

usstock_SicMajorGroup: "Industrial And Commercial Machinery And Computer Equipment"

usstock_Symbol: "AAPL"

>>> download_master_file("aapl.csv", symbols="AAPL", fields="usstock*")

>>> securities = pd.read_csv("aapl.csv")

>>> securities.iloc[0]

Sid FIBBG000B9XRY4

usstock_DateDelisted NaN

usstock_FirstPriceDate 2007-01-03

usstock_LastPriceDate 2020-04-03

usstock_Mic XNAS

usstock_Name APPLE INC

usstock_SecurityType Common Stock

usstock_SecurityType2 Common Stock

usstock_Sic Electronic Computers

usstock_SicCode 3571

usstock_SicDivision Manufacturing

usstock_SicIndustryGroup Computer And Office Equipment

usstock_SicMajorGroup Industrial And Commercial Machinery And Comput...

usstock_Symbol AAPL

$ curl -X GET 'http://houston/master/securities.json?symbols=AAPL&fields=usstock%2A' | json2yml

---

-

Sid: "FIBBG000B9XRY4"

usstock_DateDelisted: null

usstock_FirstPriceDate: "2007-01-03"

usstock_LastPriceDate: "2020-04-03"

usstock_Mic: "XNAS"

usstock_Name: "APPLE INC"

usstock_SecurityType: "Common Stock"

usstock_SecurityType2: "Common Stock"

usstock_Sic: "Electronic Computers"

usstock_SicCode: 3571

usstock_SicDivision: "Manufacturing"

usstock_SicIndustryGroup: "Computer And Office Equipment"

usstock_SicMajorGroup: "Industrial And Commercial Machinery And Computer Equipment"

usstock_Symbol: "AAPL"

Finally, use "*" to return all core and extended fields:

$ quantrocket master get --symbols 'AAPL' --fields '*' --json | json2yml

---

-

Sid: "FIBBG000B9XRY4"

Symbol: "AAPL"

Exchange: "XNAS"

...

usstock_SicIndustryGroup: "Computer And Office Equipment"

usstock_SicMajorGroup: "Industrial And Commercial Machinery And Computer Equipment"

usstock_Symbol: "AAPL"

>>> download_master_file("aapl.csv", symbols="AAPL", fields="*")

>>> securities = pd.read_csv("aapl.csv")

>>> securities.iloc[0]

Sid FIBBG000B9XRY4

Symbol AAPL

Exchange XNAS

...

usstock_SicIndustryGroup Computer And Office Equipment

usstock_SicMajorGroup Industrial And Commercial Machinery And Comput...

usstock_Symbol AAPL

$ curl -X GET 'http://houston/master/securities.json?symbols=AAPL&fields=%2A' | json2yml

---

-

Sid: "FIBBG000B9XRY4"

Symbol: "AAPL"

Exchange: "XNAS"

...

usstock_SicIndustryGroup: "Computer And Office Equipment"

usstock_SicMajorGroup: "Industrial And Commercial Machinery And Computer Equipment"

usstock_Symbol: "AAPL"

Limit by vendor

In some cases, you might want to limit records to those provided by a specific vendor. For example, you might wish to create a universe of securities supported by your broker. For this purpose, use the --vendors/vendors parameter. This will cause the query to search the requested vendors only:

Don't confuse --vendors/vendors with --fields/fields. Limiting --fields/fields to a specific vendor will search all vendors but only return the requested vendor's fields. Limiting --vendors/vendors to a specific vendor will only search the requested vendor but may return all fields (depending on the --fields/fields parameter). In other words, --vendors/vendors controls what is searched, while --fields/fields controls output.

Security types

The following security types or asset classes are available:

| Code | Asset class |

|---|

| STK | stocks |

| ETF | ETFs |

| FUT | futures |

| CASH | FX |

| IND | indices |

| OPT | options (see docs) |

| FOP | futures options (see docs) |

| BAG | combos (see docs) |

With the exception of ETFs, these security type codes are stored in the SecType field of the master file. ETFs are a special case. Stocks and ETFs are distinguished as follows in the master file:

| SecType field | Etf field |

|---|

| ETF | STK | 1 |

| Stock | STK | 0 |

More detailed security types are also available from many vendors. See the following fields:

edi_SecTypeCode and edi_SecTypeDescfigi_SecurityType and figi_SecurityType2sharadar_Categoryusstock_SecurityType and usstock_SecurityType2

Universes

Once you've collected listings that interest you, you can group them into meaningful universes. Universes provide a convenient way to refer to and manipulate groups of securities when collecting historical data, running a trading strategy, etc. You can create universes based on exchanges, security types, sectors, liquidity, or any criteria you like.

The most common way to create a universe is to download a master file that includes the securities you want, then create the universe from the master file:

$ quantrocket master get --exchanges 'XHKG' --sec-types 'STK' --outfile hongkong_securities.csv

$ quantrocket master universe 'hong-kong-stk' --infile hongkong_securities.csv

code: hong-kong-stk

inserted: 2216

provided: 2216

total_after_insert: 2216

>>> from quantrocket.master import download_master_file, create_universe

>>> download_master_file("hongkong_securities.csv", exchanges=["XHKG"], sec_types="STK")

>>> create_universe("hong-kong-stk", infilepath_or_buffer="hongkong_securities.csv")

{'code': 'hong-kong-stk',

'inserted': 2216,

'provided': 2216,

'total_after_insert': 2216}

$ curl -X GET 'http://houston/master/securities.csv?exchanges=XHKG&sec_types=STK' > hongkong_securities.csv

$ curl -X PUT 'http://houston/master/universes/hong-kong-stk' --upload-file hongkong_securities.csv

{"code": "hong-kong-stk", "provided": 2216, "inserted": 2216, "total_after_insert": 2216}

Using the CLI, you can create a universe in one-line by piping the downloaded CSV to the universe command, using --infile - to specify reading the input file from stdin:

$ quantrocket master get --exchanges 'XCME' --symbols 'ES' --sec-types 'FUT' | quantrocket master universe 'es-fut' --infile -

code: es-fut

inserted: 12

provided: 12

total_after_insert: 12

Using the Python API, you can filter the master file in pandas, or using QGrid, then save the DataFrame to CSV and upload it:

>>> download_master_file("us_stk.csv", exchanges=["XNYS", "XNAS", "ARCX", "XASE"], sec_types="STK", fields="usstock*")

>>> securities = pd.read_csv("us_stk.csv")

>>> adrs = securities[securities.usstock_SecurityType2=="Depositary Receipt"]

>>> adrs.to_csv("adrs.csv")

>>> create_universe("us-adrs", infilepath_or_buffer="adrs.csv")

{'code': 'us-adrs',

'provided': 669,

'inserted': 669,

'total_after_insert': 669}

You can also manually edit a CSV file, deleting rows you don't want, before uploading the file to create a universe.

When uploading a file to create a universe, only the Sid column matters. This means the CSV file need not be a master file; it can be any file with a Sid column, such as a CSV file of fundamentals.

You can also create a universe from existing universes:

$ quantrocket master universe 'asx' --from-universes 'asx-sml' 'asx-mid' 'asx-lrg'

code: asx

inserted: 1604

provided: 1604

total_after_insert: 1604

>>> from quantrocket.master import create_universe

>>> create_universe("asx", from_universes=["asx-sml", "asx-mid", "asx-lrg"])

{'code': 'asx',

'inserted': 1604,

'provided': 1604,

'total_after_insert': 1604}

$ curl -X PUT 'http://houston/master/universes/asx?from_universes=asx-sml&from_universes=asx-mid&from_universes=asx-lrg'

{"code": "asx", "provided": 1604, "inserted": 1604, "total_after_insert": 1604}

Universes are static. If new securities become available that you want to include in your universe, you can add them to an existing universe using

--append/append=True:

$ quantrocket master get --exchanges 'XCME' --symbols 'ES' --sec-types 'FUT' | quantrocket master universe 'es-fut' --infile - --append

code: es-fut

inserted: 22

provided: 34

total_after_insert: 34

>>> download_master_file("es_fut.csv", exchanges="XCME", symbols="ES", sec_types="FUT")

>>> create_universe("es-fut", infilepath_or_buffer="es_fut.csv", append=True)

{'code': 'es-fut',

'provided': 34,

'inserted': 22,

'total_after_insert': 34}

$ curl -X GET 'http://houston/master/securities.csv?exchanges=XCME&sec_types=FUT&symbols=ES' > es_fut.csv